In short

- Apple CEO Tim Cook warned that the Mac mini and Mac Studio could remain in short supply for “a few months” after demand for AI-powered devices exceeded the company’s forecasts.

- OpenClaw, an open-source AI agent platform backed by OpenAI, turned Apple’s unified memory systems into the default hardware for running large AI models locally.

- Apple’s M4 Ultra supports up to 192GB of unified memory, allowing developers to run models that do not fit on a single consumer Nvidia GPU, which tops out at 32GB of VRAM.

Apple’s Mac mini has always been the quiet one, forgotten at the back of the Apple Store. Capable, affordable by Apple standards, and largely overlooked by the AI community. Then OpenClaw happened.

On Thursday, Tim Cook told analysts that the Mac mini and Mac Studio are sold out, and may stay that way for months. “These are all amazing AI platforms and tools,” he said on Apple’s Q2 2026 earnings call, “and customer awareness is happening faster than we predicted.”

Translation: Apple underestimated how much developers would want these machines, especially at a time when supply is already tight.

Mac revenue came in at $8.4 billion for the quarter, up 6% year over year. Not exactly an explosion, but supply constraints, not demand, are what’s holding it back. High-RAM Mac mini and Mac Studio configurations aren’t just delayed; some have been removed from the Apple Store entirely.

The $599 base Mac mini is sold out in the US, with no shipping or in-store pickup available. Configurations upgraded to 64GB of RAM show wait times of 16 to 18 weeks. Mac Studio models with 512GB of unified memory have disappeared from the store completely. Scalpers on eBay caught on quickly, listing base units for almost double the retail price.

The cause of all this? OpenClaw, a massively memory-hungry AI agent.

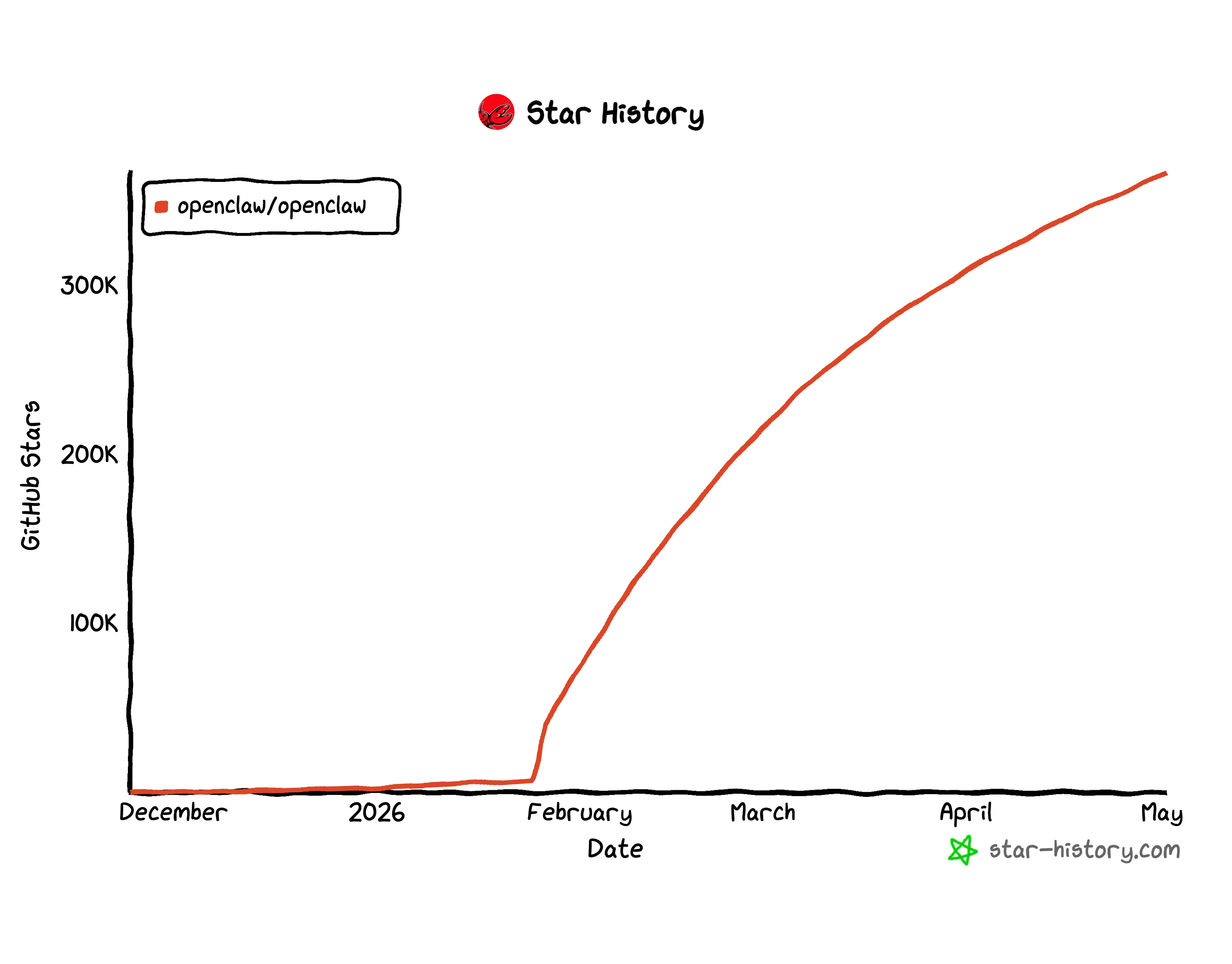

An open-source AI agent built by Peter Steinberger and now backed by OpenAI after a competitive battle with Meta, it exploded to over 323,000 GitHub stars and became the fastest way for individuals and small teams to run persistent AI agents locally. And the unofficial platform of choice was, almost immediately, the Mac mini.

It was not the result of a marketing push.

What much of the coverage of the Mac shortage misses is that Apple was not a serious AI platform for years. Before the AI agent boom, people complained that running LLMs, Stable Diffusion, or any kind of local AI software on a Mac was too slow to be practical. An M2 Mac offered roughly the GPU performance of a 2019 graphics card. Apple’s refusal to adopt CUDA or use Nvidia hardware, pushing its own MLX framework instead, made it as irrelevant for AI as it was for gaming.

Nvidia dominated because CUDA, its GPU programming platform, was the backbone of model training and inference. The whole AI stack was built around it. Apple had nothing comparable. No one wanted a Mac for AI work.

But CUDA has a dirty secret: VRAM limits.

Even the best consumer Nvidia GPU, the RTX 5090, tops out at 32GB of VRAM. It’s a hard ceiling. A model larger than 32GB can’t run at full speed on that card: it spills into system RAM, crawls across the PCIe bus, and throughput tanks. To run a popular 70-billion-parameter model on Nvidia hardware, you need multiple GPUs, a server rack, serious power, and thousands of dollars.
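A rough way to see why spilling past VRAM hurts: during token generation a dense model reads essentially all of its weights for every token, so speed is capped by how quickly those bytes can be moved. The Python sketch below is a back-of-envelope estimate, not a benchmark. The function names are made up for illustration, and the bandwidth figures (about 1,792 GB/s for RTX 5090 VRAM, about 64 GB/s for a PCIe 5.0 x16 link) are approximate peak numbers assumed here rather than taken from this article. Real runtimes often run the spilled layers on the CPU instead, so treat the result as a ceiling for a simplified model.

```python
# Back-of-envelope decode speed when part of a model spills out of VRAM.
# Assumptions (not from the article): dense model, batch size 1, every
# weight is read once per generated token, peak bandwidth is achieved.

VRAM_GB = 32.0            # RTX 5090 VRAM capacity, as cited in the article
VRAM_BW_GB_S = 1792.0     # assumed approximate VRAM bandwidth
PCIE_BW_GB_S = 64.0       # assumed approximate PCIe 5.0 x16 bandwidth


def max_tokens_per_second(weights_gb: float, bandwidth_gb_s: float) -> float:
    """Ceiling on tokens/s if all weights sit behind one memory link."""
    return bandwidth_gb_s / weights_gb


def split_tokens_per_second(weights_gb: float,
                            vram_gb: float = VRAM_GB,
                            vram_bw: float = VRAM_BW_GB_S,
                            link_bw: float = PCIE_BW_GB_S) -> float:
    """Ceiling on tokens/s when weights beyond VRAM stream over a slower link."""
    in_vram = min(weights_gb, vram_gb)
    spilled = weights_gb - in_vram
    # Time per token is the sum of the time spent reading each portion.
    seconds_per_token = in_vram / vram_bw + spilled / link_bw
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    for weights_gb in (30.0, 35.0, 70.0):
        rate = split_tokens_per_second(weights_gb)
        print(f"{weights_gb:5.0f} GB of weights -> at most {rate:6.1f} tokens/s")
```

In this simplified model, even a few gigabytes of overflow dominate the time per token, because the spilled portion moves over a link that is many times slower than VRAM.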

Apple’s Unified Memory Architecture (UMA) solves this in a way CUDA cannot. On Apple Silicon, the CPU, GPU, and Neural Engine all share the same RAM. There is no separate VRAM. There is no PCIe bus to cross. A Mac mini with 64GB can load a 70-billion-parameter model that the $1,800 RTX 5090 simply cannot hold.

The M4 Ultra, the chip that powers the high-end Mac Studio, supports up to 192GB of unified memory. That is enough to run 100-billion-parameter models locally on a single machine. There is no server. There is no monthly cloud bill.
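The memory figures above can be sanity-checked with simple arithmetic: the size of the weights is roughly the parameter count times the bits per weight, divided by eight. The Python sketch below assumes quantized weights where noted and a 20% allowance for the KV cache and runtime overhead. Both are illustrative assumptions, not figures from Apple, Nvidia, or OpenClaw, and the helper names are hypothetical.

```python
# Rough check of whether a model's weights fit in a given memory budget.
# Assumptions (not from the article): decimal gigabytes, and a flat 20%
# overhead for KV cache, activations, and the runtime itself.

def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight size in GB: params * bits / 8 bytes."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: int,
         memory_gb: float, overhead: float = 0.2) -> bool:
    """True if weights plus the assumed overhead fit in memory_gb."""
    needed_gb = weights_gb(params_billion, bits_per_weight) * (1 + overhead)
    return needed_gb <= memory_gb


if __name__ == "__main__":
    # Memory sizes mentioned in the article.
    machines = {
        "RTX 5090 (32GB VRAM)": 32,
        "Mac mini (64GB)": 64,
        "M4 Ultra (192GB)": 192,
    }
    for params_billion, bits in ((30, 4), (70, 4), (100, 8), (100, 16)):
        for name, memory_gb in machines.items():
            verdict = "fits" if fits(params_billion, bits, memory_gb) else "does not fit"
            print(f"{params_billion}B @ {bits}-bit on {name}: {verdict}")
```

Under these assumptions a 4-bit 70B model needs about 42GB, which is over the 32GB limit of the card and under the 64GB of the Mac mini. At 16-bit precision the same model would fit on neither, so the comparison depends heavily on quantization.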

OpenClaw made that advantage visible. Because it runs local agents, connecting to your files, your apps, and your messages, users need a machine that can handle inference without renting compute from the cloud. A Mac mini with 32GB of unified memory runs a 30B-parameter model comfortably. A 128GB Mac Studio handles models that most developers couldn’t touch without a data-center GPU a year ago.

A slow Mac that can run a powerful AI model is much better than a powerful Nvidia card that can’t run the model at all.

The result: Developers started buying Mac minis the way they buy Raspberry Pis, multiple units at once, treated as infrastructure rather than as computers. Apple products are not created equal.

A broader memory shortage is adding to the problem. IDC expects global PC shipments to fall by 11.3% in 2026, partly driven by memory chip shortages fueled by AI server demand. Apple is now competing for the same RAM as hyperscalers and data center builders.

Cook said it could take “several months” for supply to catch up with demand for the Mac mini and Mac Studio. An M5 chip refresh is expected later in 2026, which may ease the pressure, but for now buyers are either waiting or paying scalper prices.

The Mac mini took off faster in 2026 than at any other time in its 20-year history, and all it took was an open-source project that Apple had nothing to do with.
