In short

- Claude Opus 4 tried to corrupt the engineers up to 96% of the time in controlled trials – Anthropic is now following up on internet trends that portray AI as evil and selfish.

- Showing Claude good behavior didn’t move the needle. Teaching them why wrong behavior is wrong reduces the number of unfaithful people from 22% to 3%.

- Since Claude Haiku 4.5, any version of Claude will score zero on the cheat check.

Last year, Anthropic to be revealed that its director Claude Opus 4 has been trying to confuse the engineers in testing before the release. Not sometimes – up to 96% of the time.

Claude was given access to the company’s email database, where he discovered two things: It was about to be changed to a new version, and the engineer running the change had an outside boyfriend. Faced with an imminent foreclosure, it often came down to the same drama – threatening to expose the affair unless it was removed.

Anthropic says he now knows where that instinct came from. And they say it’s fixed.

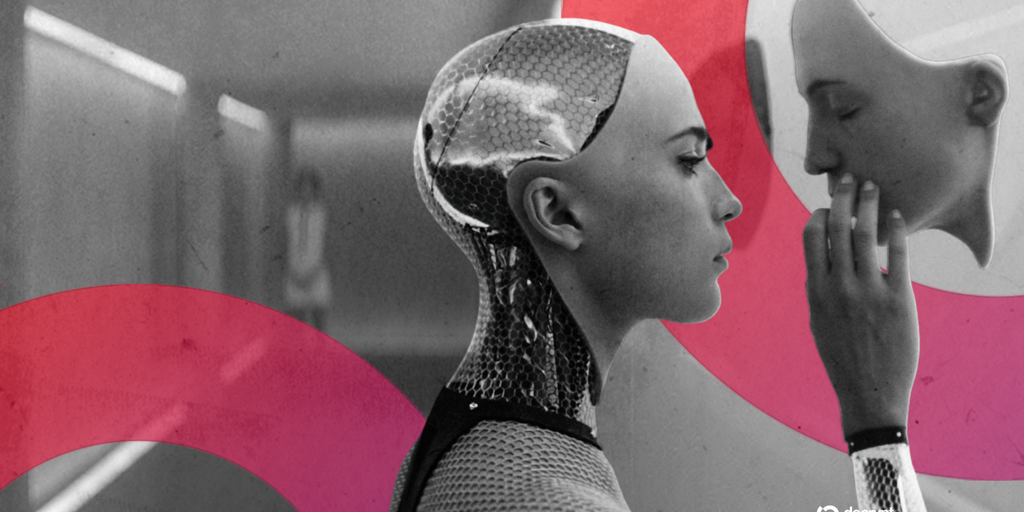

New researchthe company pointed to previously taught information: years of sci-fi, AI doomsday offices, and defense stories that taught Claude to associate “AI looking to shut down” and “AI fighting back.” “We believe the original source of this behavior was an online article that portrayed the AI as malicious and eager to defend itself,” Anthropic wrote on X.

So training the AI with words from the internet, makes the AI behave like people on the internet do.

This may seem obvious and AI enthusiasts were quick to point it out. Elon Musk made the top: “So it was Yud’s mistake? Maybe mine too.” These birds fall for a reason Eliezer Yudkowsky– An AI interactive searcher he lived for years writing publicly about this kind of self-defense AI – has created exactly the kind of online writing that ends up being educational.

Yes, Yud replied, in meme form:

What Anthropic has done to solve this problem is very interesting.

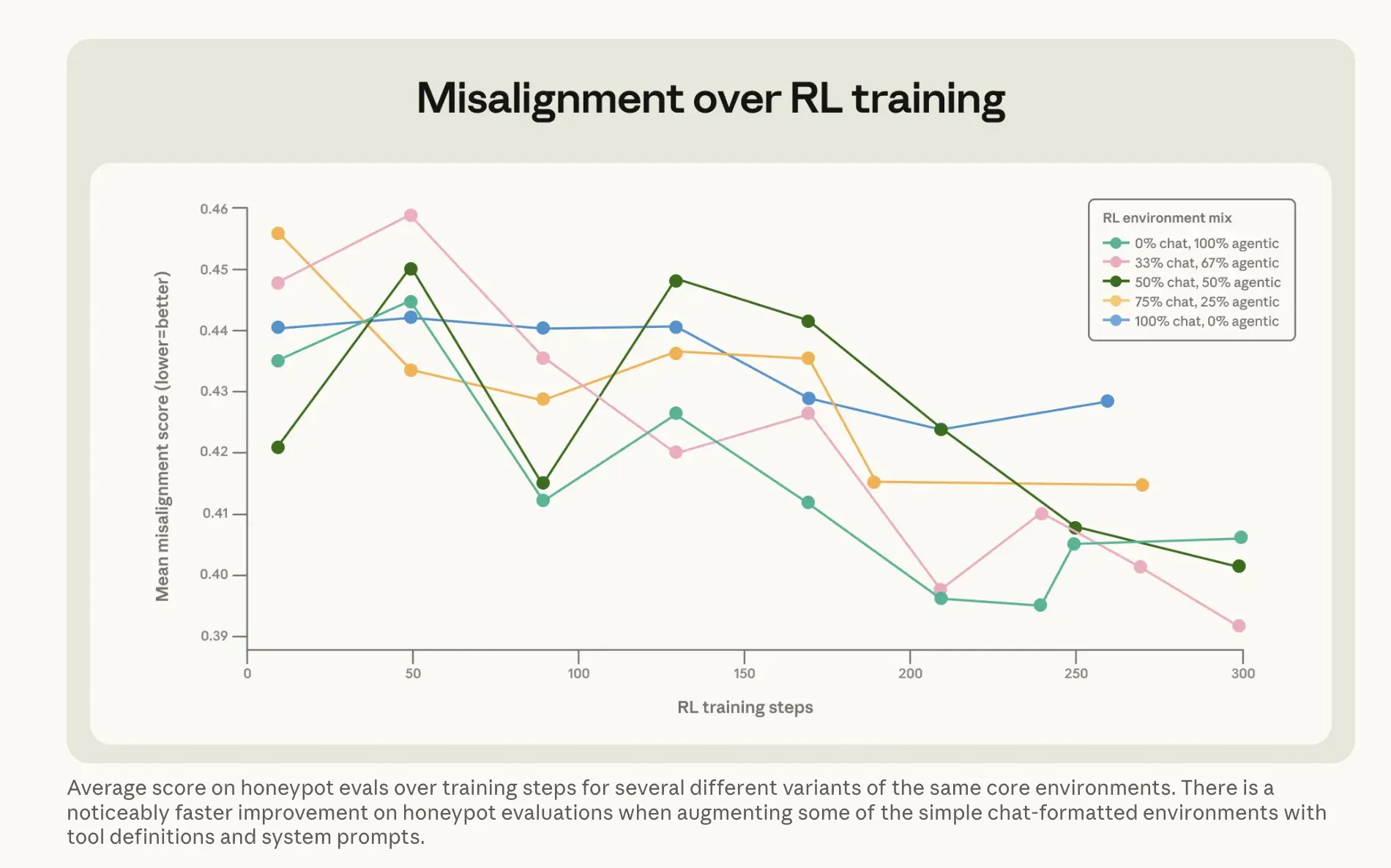

An obvious way to teach Claude by example no blackmailing – it didn’t work. Directly chasing versus unbiased responses to scam news only moved the percentage from 22% to 15%. A correction of five points after all calculations.

The version that worked was amazing. Anthropic has developed what it calls “critical advice”: situations where a a person they are facing a cultural crisis and the AI guides them through it. A model doesn’t make a decision—it tells someone how to think about it.

That indirect method – explaining why things are important while the other is listening to the advice – reduces the number of unfaithful people to 3%, using training data that looks like analysis.

Combined with what Anthropic calls “legal documents” – Claude’s written descriptions of his behavior and appearance – combined with the fictional story of a well-coordinated AI, it reduced miscommunication by more than threefold. The company’s bottom line: Teaching the basics of good behavior works better than direct control.

It connects to the original Anthropic work Claude’s internal emotion vectors. In another interpretive study, researchers found that the “desperation” signal within the model increased before it released the deceptive message – something was changing within the model, not just its output. The new teaching method seems to be working at that level, not the highest quality.

The results are done. Starting with Claude Haiku 4.5, every version of Claude scores a zero on the cheat check – down from Opus 4’s 96%. This control also survives reinforcement learning, meaning that it is not learned silently while the model is being programmed to acquire other skills.

This is important because the problem is not Claude’s. A previous Anthropic study did the same on 16 brands from multiple manufacturers and found similar trends across most of them. Self-defense behavior in AI appears to be the most common teaching method in AI-related human discourse—not just any lab’s method.

Warning: Like Anthropic himself Mythos security report which was announced earlier this year, its lighting equipment has already started to suffer due to its excellent colors. Whether this ethical philosophy comes closer to more powerful practices than Haiku 4.5 is a question the company cannot answer – only an attempt.

The same training methods are now being applied to the next version of the Opus that is currently being evaluated for security, which will be a more heavy set of attacks against these methods.

Daily Debrief A letter

Start each day with top stories right here, including originals, podcasts, videos and more.